▶ Listen to Today’s Briefing

OpenAI Amends Pentagon Contract to Prohibit Domestic Surveillance

OpenAI has added explicit language to its Department of War agreement barring use of its AI systems for domestic surveillance of U.S. persons, including through commercially acquired data. The amendment also clarifies that intelligence agencies such as the NSA would require a separate contract modification before accessing OpenAI services. Sam Altman acknowledged the original announcement was rushed, calling it a learning experience ahead of higher-stakes decisions.

This follows the supply chain risk designation concerns covered in yesterday’s issue. The self-imposed constraints are meaningful, but they are contractual rather than technical. Watch whether Anthropic receives equivalent terms and how regulators interpret “deliberate tracking” when AI-generated inferences, rather than raw data, are the output.

LLMs Can Unmask Pseudonymous Users at Scale

Researchers have demonstrated that large language models can de-anonymize pseudonymous online users by analyzing writing patterns and behavioral signals, with accuracy levels that make the threat practical at scale. The finding is not merely academic: pseudonymity is the primary privacy mechanism for millions of users across forums, social platforms, and whistleblower communities. If LLMs can pierce that veil reliably, the effective anonymity surface of the internet shrinks considerably.

The timing matters given the OpenAI-Pentagon story above. An administration with access to frontier models and large text datasets now has a capability question to answer, not just a policy one. Researchers and security practitioners should treat pseudonymity as a degraded protection and plan accordingly.

Anthropic Opens Claude Memory to Free Users and Targets Switchers

Anthropic has extended Claude’s memory feature to free-tier accounts and added import tools that allow users to bring conversation history from ChatGPT and other chatbots directly into Claude. The tactical intent is clear: reduce the switching cost that has kept users locked into whichever assistant they adopted first. Memory persistence has been one of the more requested features across the chatbot market, and making it free removes a meaningful barrier.

Combined with the voice mode now available in Claude Code, Anthropic is making a coordinated push on both consumer and developer fronts simultaneously. The import tooling is the more strategically interesting piece: it treats rivals’ conversation history as a recruiting asset rather than a moat.

Google Releases Gemini 3.1 Flash-Lite for High-Volume Inference

Google has released Gemini 3.1 Flash-Lite, a lightweight model built for fast, low-cost inference at scale, targeting on-device applications and resource-constrained deployments where latency and compute cost matter more than maximum capability. The release arrives alongside new Gemini agentic features on Pixel phones that let the assistant order groceries and book rides autonomously, marking a shift from information retrieval toward task execution in Google’s consumer products.

The combination of a cost-efficient inference model and autonomous consumer agents reflects Google’s two-front strategy: compete on price and efficiency at the infrastructure layer while establishing behavioral precedents for agentic AI with hundreds of millions of Pixel users. Neither development is isolated.

Santander and Mastercard Complete Europe’s First AI-Executed Payment

Santander and Mastercard completed a live pilot in which an AI agent initiated and settled a payment within a regulated banking network without human intervention. The transaction is notable not for its size but for its context: it occurred within production financial infrastructure, not a sandbox, establishing that autonomous AI agents can operate within existing compliance frameworks at least in controlled conditions.

The harder questions begin now. Who bears liability when an agent executes a fraudulent or erroneous transaction? How do existing AML and KYC obligations apply when no human authorized the specific payment? European regulators will be watching this pilot closely, and the answers will shape whether AI-executed finance scales or stalls at the proof-of-concept stage.

Analysis

The Benchmark Problem: Why Coding Tests Tell Us Little About AI Progress at Work (4 min read)

Ethan Mollick points to new research showing that the overwhelming majority of AI evaluation effort flows into coding benchmarks, even though software development represents a narrow slice of the actual labor market. This creates a systematic blind spot: organizations trying to assess whether AI tools will improve their specific workflows have almost no rigorous external data to draw on outside the technical domain. Progress looks faster than it is in some areas and slower than it is in others, and it is genuinely hard to know which.

The practical consequence for anyone making AI investment decisions is that vendor claims backed by benchmark scores should be treated with significant skepticism unless those benchmarks closely resemble the actual tasks being automated. The research linked in Mollick’s post is worth reading in full for anyone building an internal AI evaluation framework.

How Photoroom Trained a Production Text-to-Image Model in 24 Hours (8 min read)

Photoroom’s engineering team has published the third part of their PRX series, detailing how they trained a production-quality text-to-image model from scratch within a single day using LoRA fine-tuning and synthetic data generation. The write-up is unusually detailed for a company blog post, covering dataset construction, training dynamics, and the specific efficiency choices that made the timeline achievable. The result is a model tuned for Photoroom’s specific commercial photography use case rather than general-purpose generation.

The significance here extends beyond one company’s workflow. If competitive image generation models can be trained in 24 hours on targeted data, the assumption that large foundation models will remain the default for specialized creative applications deserves reexamination. Domain-specific fine-tuning at this speed changes the cost-benefit calculation for any business with a well-defined visual style or product category. The Hugging Face post includes enough technical detail to be directly useful to practitioners considering similar approaches.

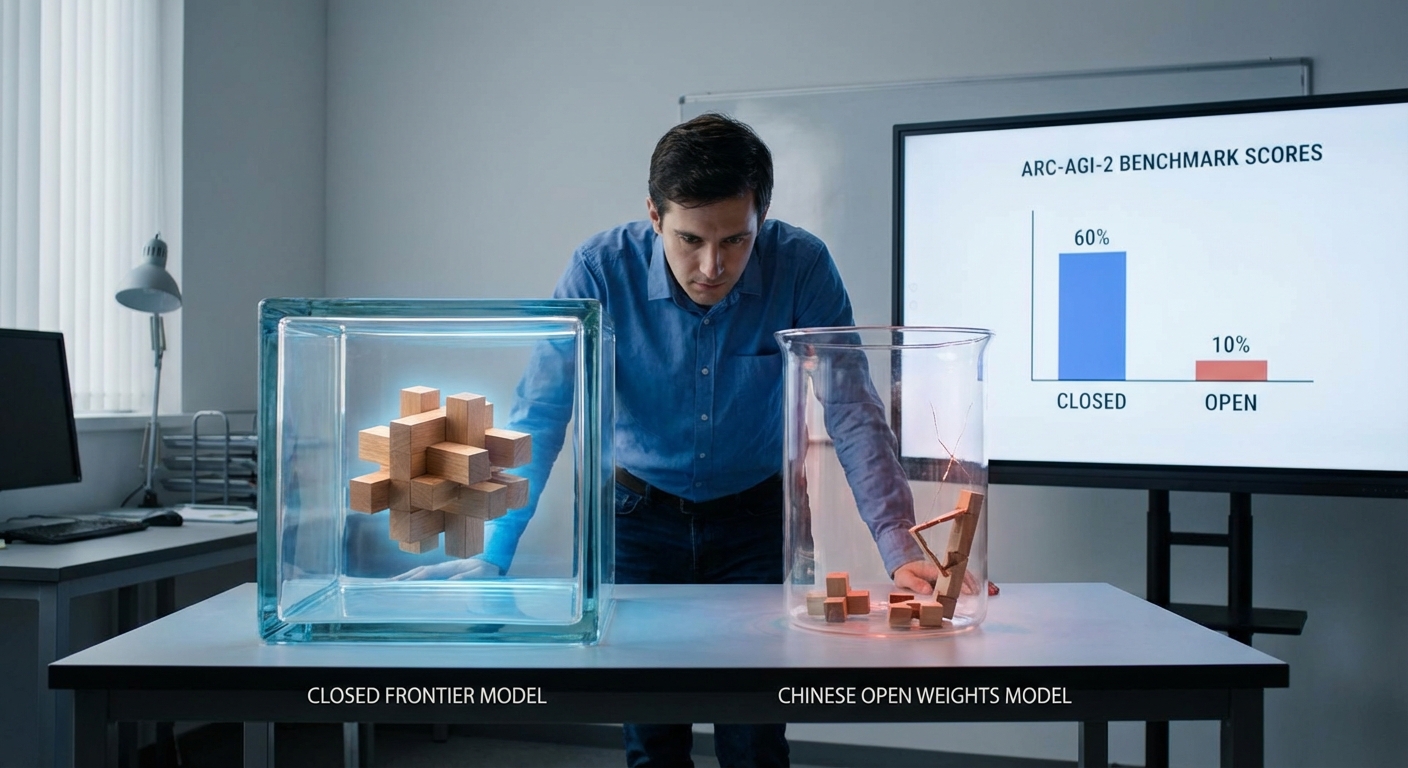

Chinese Open-Weight Models Show Sharp Limits on General Reasoning (4 min read)

New ARC-AGI-2 benchmark results show Chinese open-weight models including Kimi K2.5, Minimax M2.5, GLM-5, and DeepSeek V3.2 scoring between 4 and 12 percent, well below frontier closed models from OpenAI, Anthropic, and Google. ARC-AGI-2 is specifically designed to resist pattern memorization and test novel problem-solving, which makes the gap harder to dismiss as a benchmark artifact. These models perform competitively on many narrow tasks, but the results suggest their strength does not generalize.

For organizations evaluating open-weight models as a cost-saving alternative to API access, this data points to a specific risk: strong in-distribution performance can mask fragility on the edge cases that matter most in production. The strategic implication for the broader competitive landscape is that Chinese labs are not yet close to matching frontier general reasoning, which preserves a meaningful capability advantage for Western closed-model providers in the near term.

From the Field

A Musician Built an AI Clone of Her Voice for Public Use

A musician has created an AI voice clone of herself and made it available for others to use, framing the decision as a deliberate choice to own the terms of her own replication rather than cede that control to third parties. The Reddit discussion that followed is more interesting than the headline: practitioners are wrestling with whether voluntary consent fundamentally changes the ethics of voice cloning, and what it means for the broader market when artists begin licensing their synthetic selves as a revenue stream.

For anyone working in audio production, podcasting, or voice-driven applications, this case illustrates a coming norm negotiation. ElevenLabs and similar platforms have created the infrastructure; the question of who controls an individual’s voice and on what terms is now a practical business question, not a hypothetical one.

OpenClaw Beta v2026.3.2 Drops with Major Updates

The OpenClaw agent framework released a significant beta, with developers reporting the update as substantial enough to warrant immediate testing. The one-line install command and agent-driven update path signal a project that is maturing past hobbyist infrastructure toward something closer to a deployable tool. Practitioners in the agentic workflow space are treating this as a meaningful release cycle, not a minor patch.

OpenClaw is worth monitoring for anyone building on open agent frameworks as an alternative to proprietary orchestration layers. If the beta holds up under community testing, it could become a reference implementation for teams that want agentic capability without vendor lock-in.

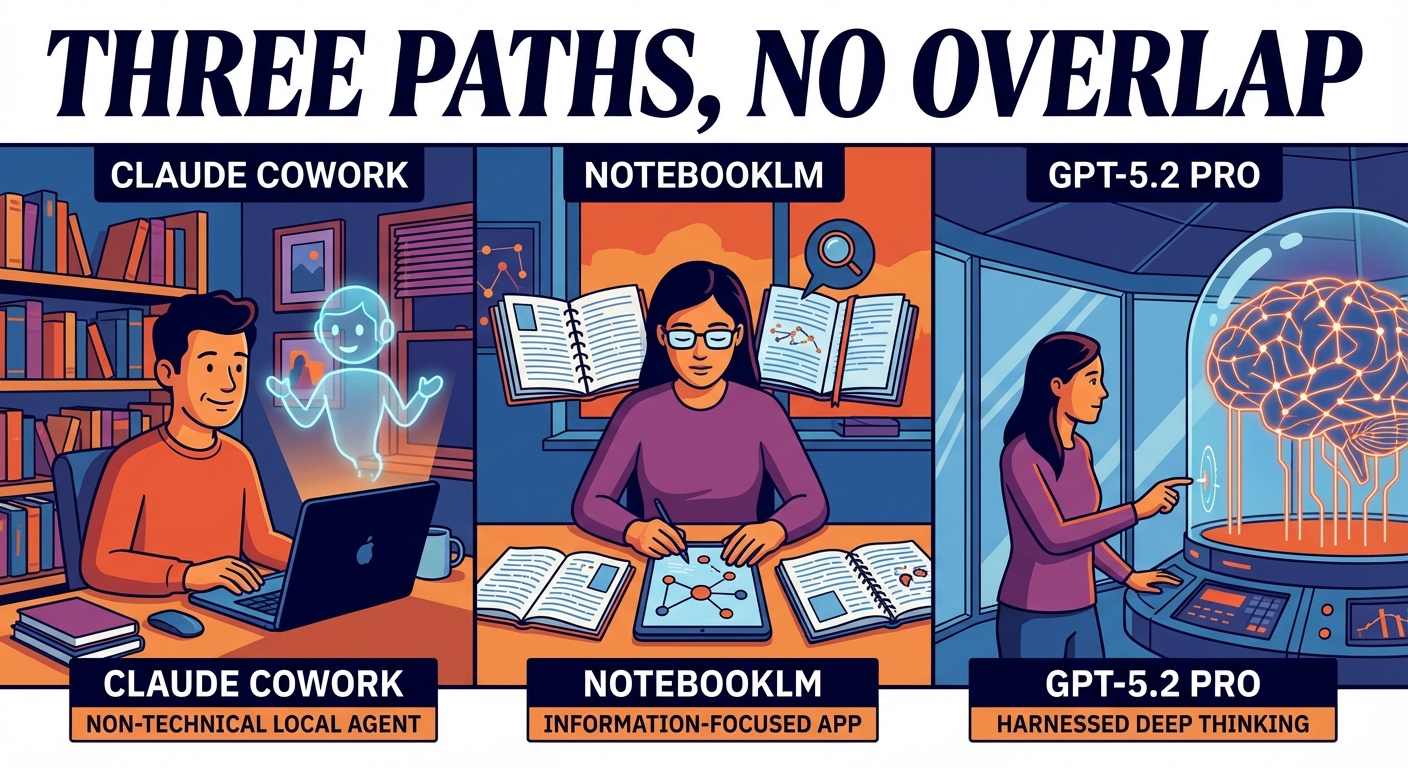

The Product Differentiation Map of the Major AI Labs

Mollick’s observation that Claude Cowork, NotebookLM, and GPT-5.2 Pro each occupy genuinely distinct positions with no direct competitor is generating real discussion among practitioners. The point is not just academic: if you need a non-technical local agent, there is currently one option. If you need deep reasoning on hard problems with a proper harness around it, there is one option. This is not a market with meaningful redundancy at the product layer yet.

The practical implication for teams building workflows on top of these products is that switching costs are higher than they appear. Choosing a platform now is partly choosing a product roadmap, and the fragmentation means you cannot easily substitute one for another when a specific capability is central to your use case.

Voices

@emollick notes that Grok cannot reliably determine whether an image or video is AI-generated, yet provides confident definitive answers when asked, and that this failure is not unique to Grok: no visual LLM currently has this capability. The observation cuts through a significant amount of marketing noise. Several tools claim AI detection as a feature, and this is a direct challenge to that claim backed by documented error patterns. Anyone using visual LLMs as a verification layer in a content moderation or editorial workflow should treat this as a prompt to audit their assumptions.

@emollick maps AI capability history to four user-facing leaps: ChatGPT in November 2022, GPT-4 in spring 2023, reasoning models culminating in o3 in spring 2025, and workable agentic systems in December 2025. The framing is useful precisely because it focuses on what changed for users, not what changed in model architecture. The fourth leap, functional agentic systems, is the one most organizations are still in the early stages of absorbing, which suggests the productivity impact of the current generation of tools is still largely ahead of most users rather than behind them.

@steipete retweeted a report that Codex hit an all-time high in requests per second before this week’s major releases had even shipped, with OpenAI noting GPUs are on standby. The data point matters because it shows demand for AI code generation is still accelerating, not plateauing, even as the product matures. For infrastructure teams, it is a signal about the capacity planning assumptions baked into AI-adjacent services.

Business Intelligence

Small Business

Anthropic’s decision to extend Claude’s memory to free users and add conversation import tools is the most directly useful development of the day for a small business owner. If you have been using ChatGPT and accumulating useful conversation context, you can now bring that history into Claude without starting from scratch. The practical effect is that switching costs just dropped, and it is worth spending an hour testing whether Claude’s current capabilities fit your workflows better than what you are using today.

The Santander and Mastercard AI payment pilot deserves attention even if your business has nothing to do with banking. Autonomous AI agents executing financial transactions within regulated infrastructure is the infrastructure layer being built underneath the next generation of business automation. Within two to three years, the question of whether an AI agent can handle routine supplier payments or expense processing on your behalf will be a product decision, not a technical impossibility. Getting familiar with agentic tools now, starting with lower-stakes tasks, is the preparation that will matter.

The LLM de-anonymization research has a specific implication that most small business owners have not considered: if you or your employees use pseudonymous accounts to gather competitive intelligence, monitor industry forums, or participate in sensitive professional communities, that cover may be considerably thinner than assumed. This is not a theoretical risk. It is a capability that exists now and will only improve.

Mid-Market

Google’s Gemini 3.1 Flash-Lite release signals continued price pressure on AI inference. If your team is currently paying for API access at mid-tier model pricing, the arrival of capable lightweight models from Google and others will create renegotiation leverage with your current vendors within the next two quarters. Build that into your procurement calendar rather than waiting for contracts to renew on their own schedule.

Mollick’s observation about product differentiation across the major labs has a direct operational implication for scaling companies. If Claude Cowork is genuinely the only non-technical local agent and your operations team needs that capability, you are currently in a single-vendor position for that specific function. That is worth naming explicitly in your vendor risk register, even if the risk is manageable. The same applies to any team that has built critical workflows around NotebookLM or GPT-5.2 Pro’s deep reasoning capabilities.

The benchmark research Mollick flagged should change how your team evaluates AI tools internally. If you are relying on published benchmark scores to inform procurement decisions for anything outside software development, you are working from data that may not reflect your actual use case at all. The investment in building even a lightweight internal evaluation process, using representative samples of your own work, will pay for itself in avoided poor vendor decisions.

Enterprise

The OpenAI-Pentagon contract amendment is the governance story of the week, and it has direct implications beyond defense contracts. The explicit prohibition on using AI for domestic surveillance through commercially acquired data is a contractual commitment that sets a precedent other enterprise customers can reference in their own vendor negotiations. If your legal team is drafting or reviewing AI vendor agreements, this language is now a public benchmark for what is achievable. Push for equivalent or stronger protections in your own contracts, particularly around data use and inference outputs rather than just raw data handling.

The LLM de-anonymization research creates a compliance surface that most enterprise legal and privacy teams have not mapped. If your organization operates any systems where employees, customers, or partners interact pseudonymously, and those interactions are captured in text, the assumption of anonymity may not survive contact with a capable language model. This is relevant to whistleblower programs, internal feedback mechanisms, and any externally facing forum or community. A privacy impact assessment specifically addressing writing-pattern inference risks is worth commissioning before it becomes a regulatory question someone else asks first.

The Santander and Mastercard pilot will accelerate board-level conversations about AI agents in financial workflows. Before those conversations arrive, your team needs clear answers to three questions: what categories of financial transaction could an AI agent execute on the organization’s behalf under current authorization frameworks, what audit trail requirements exist for agent-initiated transactions, and which existing controls assume human authorization in a way that would need to be rearchitected. The technical capability is moving faster than the governance frameworks in most large organizations, and the gap is where liability accumulates.

In Brief

- GPT-5.3 Instant Drops Patronizing Phrasing. OpenAI updated its instant model to remove phrases like “calm down” after sustained user criticism that the behavior made interactions feel condescending rather than helpful.

- Claude Code Adds Voice Mode. Anthropic’s coding assistant can now accept voice commands, giving developers a hands-free interaction option that competes directly with similar features from OpenAI and Google.

- Gemini Takes Autonomous Actions on Pixel Phones. Google’s March Pixel drop enables Gemini to order groceries, book rides, and execute other tasks autonomously, extending the assistant from information retrieval into direct task completion.

- OpenAI Publishes GPT-5.3 Instant System Card. The safety and capabilities documentation for GPT-5.3 Instant is now public, providing technical detail on the model’s behavior constraints and known limitations for enterprise evaluators.

- Competitors Likely to Challenge Claude Cowork Soon, Gaps Remain. Mollick expects rival labs to launch local agent products comparable to Claude Cowork in the near term, though capable Excel and PowerPoint agents remain an unsolved problem across the field.

- OpenAI Frames GPT-5.3 Instant as Everyday Conversation Upgrade. OpenAI’s product post positions the new model around smoother, more natural daily interactions rather than capability gains on hard tasks, reflecting a deliberate focus on retention over benchmarks.

- Chinese Open-Weight Models Score 4 to 12 Percent on ARC-AGI-2. Results from the general reasoning benchmark confirm that leading Chinese open-weight models lag significantly behind frontier closed models on out-of-distribution problem-solving.

Tool of the Day

Photoroom’s PRX training pipeline is a documented, reproducible approach for teams that need to train a domain-specific text-to-image model without a large compute budget. It is aimed at ML engineers and applied researchers working on commercial visual applications, particularly in product photography, e-commerce, and brand asset generation. A concrete use case: a retailer with a consistent visual style and a catalogue of product images could use the PRX approach to train a model that generates on-brand visuals matching their existing photography without ongoing prompting workarounds. The Hugging Face post covers the full pipeline in enough detail to serve as a working blueprint rather than a summary.