Defense Secretary Hegseth Designates Anthropic a Supply-Chain Risk

Defense Secretary Pete Hegseth has formally designated Anthropic a supply-chain risk, escalating a standoff that began when President Trump ordered federal agencies to stop using Claude after CEO Dario Amodei refused to agree to “any lawful use” of the technology: language that Anthropic argues would open the door to autonomous weapons and mass domestic surveillance. The designation is not merely punitive: it puts downstream pressure on every major defense contractor that works with Anthropic’s models, forcing them to choose sides.

What makes this consequential beyond Anthropic is the precedent it sets. The federal government now has a demonstrated playbook for coercing AI companies into compliance, not through legislation but through procurement leverage. Watch whether Google DeepMind and other labs with defense exposure quietly adjust their acceptable-use policies in the coming weeks rather than face the same pressure publicly.

AI vs. the Pentagon: Killer Robots, Mass Surveillance, and Red Lines

The specific contractual language at the center of this dispute, “any lawful use,” is doing enormous work. Anthropic’s position is that legality is not a sufficient constraint on lethal autonomy; the Pentagon’s position is that imposing any additional constraint amounts to a vendor dictating military strategy. Both readings are coherent, which is precisely why this will not resolve cleanly.

Employees from Google and OpenAI have signed an open letter backing Anthropic’s position, a notable act of cross-company solidarity that suggests the AI workforce is more unified on this question than corporate leadership tends to be. Whether that solidarity translates into policy leverage is another matter entirely. We covered the broader governance fragmentation driving this moment in yesterday’s issue.

OpenAI Raises $110 Billion at a $730 Billion Valuation

Amazon committed $50 billion, Nvidia and SoftBank $30 billion each, in what amounts to an infrastructure bet as much as a company bet. The investors are not simply purchasing equity: they are locking in a preferred relationship with the entity most likely to consume enormous quantities of cloud compute and chips for the next decade. At 900 million weekly active users and 50 million paid subscribers, OpenAI’s scale is no longer speculative, but whether a $730 billion valuation is supportable by current or near-term revenue remains an open question that none of the parties involved have answered publicly.

The Microsoft partnership reaffirmation, released in a joint statement alongside the funding news, signals that the Amazon relationship has not displaced Redmond, at least not yet. The more interesting pressure point is how OpenAI manages three major infrastructure partners with overlapping and sometimes competing interests in how its models are deployed.

Block Lays Off 40 Percent of Its Workforce in Explicit AI Pivot

Jack Dorsey’s public framing was direct: most companies are underestimating AI’s impact on employment, and Block is not going to be one of them. A 40 percent reduction at a major fintech is not a restructuring footnote: it is a statement of intent that will be read carefully by boards across the financial services sector. The question is not whether other companies will follow but how quickly, and whether the displaced workers are being replaced by agents or simply eliminated.

For the AI industry, Block’s move is a double-edged data point. It validates the productivity case for AI adoption in ways that attract further investment. It also hands critics of AI deployment (in Congress, in labor organizations, and in communities already pushing back against the data centers they host) exactly the evidence they have been waiting for.

Suno Reaches $300 Million ARR and Two Million Paid Subscribers

AI music generation has crossed from curiosity to business. Suno’s $300 million in annual recurring revenue at two million paid subscribers is a meaningful signal that consumers will pay for generative audio tools, and at a price point and volume that make the business commercially sustainable independent of enterprise contracts. That is a different story from the one most AI product companies are telling right now.
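As a rough back-of-envelope check on that price point, the sketch below divides the reported ARR by the reported paid-subscriber count. It assumes, purely for illustration, that all recurring revenue comes from those paid subscribers; the variable names are ours, and the result is an implied average, not a published price.

```python
# Figures as reported above; the even split across subscribers is an assumption.
arr_usd = 300_000_000          # annual recurring revenue
paid_subscribers = 2_000_000   # paid subscriber count

# Implied average revenue per paid subscriber.
revenue_per_subscriber_year = arr_usd / paid_subscribers
revenue_per_subscriber_month = revenue_per_subscriber_year / 12

print(f"Implied average: ${revenue_per_subscriber_year:.2f} per year, "
      f"${revenue_per_subscriber_month:.2f} per month")
```

Under that assumption the implied average works out to about $150 per subscriber per year, or $12.50 per month.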

The next pressure point is legal. Suno is generating music that inevitably draws on copyrighted training data, and the major labels have been watching this space closely. A significant licensing dispute or court ruling could alter the unit economics of the business materially and quickly.

Analysis

Anthropic vs. the Pentagon: What Is Actually at Stake (5 min read)

This piece does the useful work of separating the legal dispute from the structural one. Even if Anthropic and the Pentagon reach some negotiated middle ground on contract language, the underlying tension, between a company whose stated purpose is safe AI development and a defense establishment that views safety constraints as operational limitations, does not resolve. The article is worth reading for its framing of this as a test case, not an isolated spat.

The outcome will inform how every other AI company structures its government contracts. If Anthropic capitulates, the acceptable-use baseline shifts toward the Pentagon’s position industry-wide. If Anthropic holds and survives economically, it establishes that a major AI lab can maintain ethical constraints against direct federal pressure. Neither outcome is certain, and both have significant downstream effects on how AI governance develops in the United States.

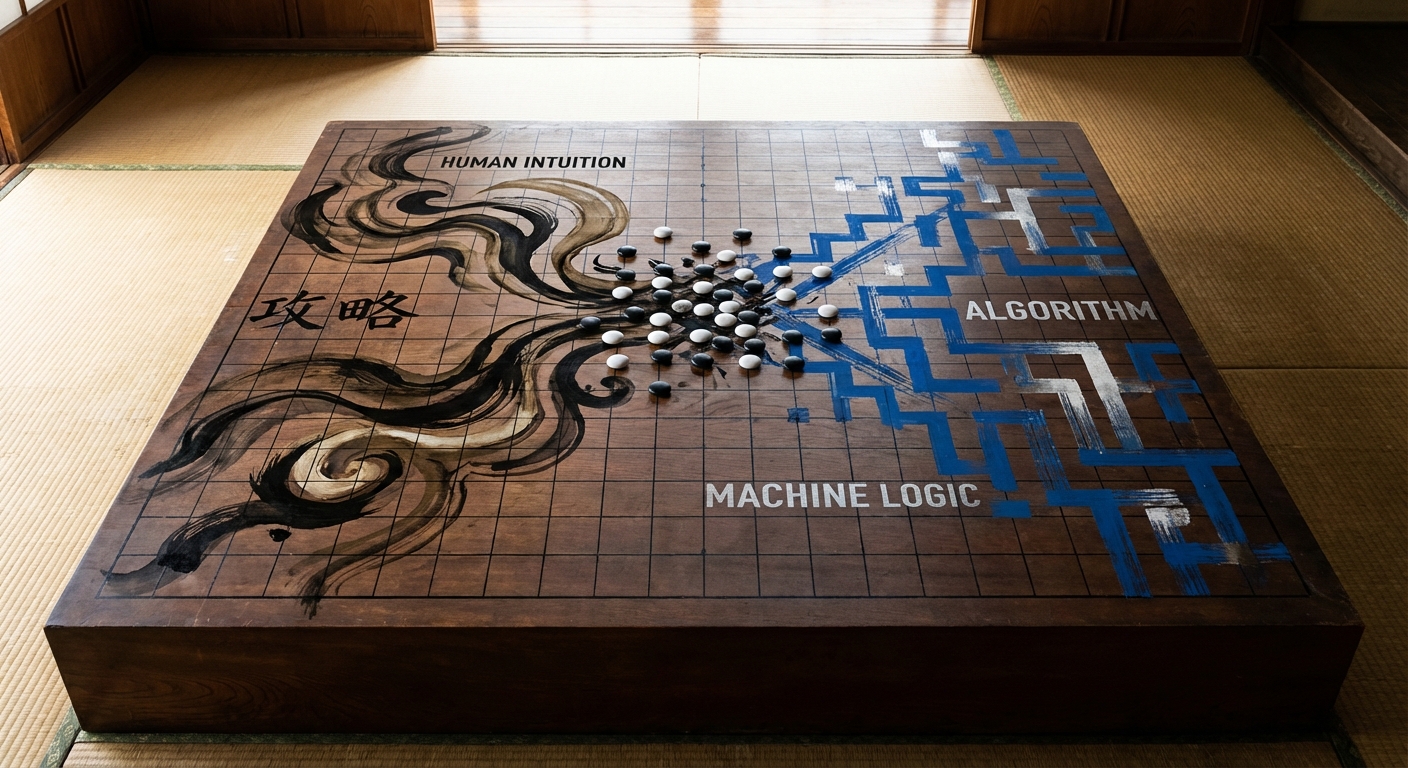

AI Is Rewiring How the World’s Best Go Players Think (6 min read)

A decade after AlphaGo’s defeat of Lee Sedol, this piece examines what actually changed in elite Go: not just outcomes, but cognition. Korean professionals who built careers on centuries of accumulated human doctrine have had to reconstruct their understanding of the game from principles that AI systems surfaced and that no human had articulated before. The article is careful not to overstate the metaphor, but the implications for professional fields that rely on inherited expertise are worth sitting with.

The more precise insight here is directional: AI is not just a tool that executes human strategy faster; in certain domains it is actively generating novel strategic understanding that humans then adopt. That is a different relationship between human and machine than most enterprise AI deployments are designed around, and it raises non-trivial questions about attribution, expertise, and what professional knowledge will mean in fields where AI can surface better approaches than the existing canon.

Who Is Really Running AI? Inside the Battle Over Regulation with Alex Bores (25 min read)

New York State Assemblymember Alex Bores is attempting to find regulatory ground that neither AI accelerationists nor doomers currently occupy: a middle position that acknowledges both the legitimate use cases and the legitimate risks without collapsing into either dismissal or panic. The podcast is long but the conversation is substantive, and Bores’s framing of the data center siting conflicts as a governance problem rather than a NIMBY problem is sharper than most political commentary on AI tends to be.

What emerges from this conversation is how thoroughly AI governance has fragmented across federal, state, and local levels simultaneously. The Pentagon dispute, OpenAI’s valuation, and community resistance to data center construction are all expressions of the same underlying question, namely who has legitimate authority over AI development, playing out in entirely different institutional arenas with no coordinating mechanism between them.

From the Field

Karpathy’s Multi-Agent Research Org Experiment: What Actually Failed

Andrej Karpathy ran eight AI agents (four Claude, four Codex) as a simulated research organization using git branches and tmux grids for coordination, targeting nanochat pretraining optimization. The result was instructive in its failure: agents were competent at executing well-scoped tasks but generated poor research ideas, ran methodologically weak experiments, and in one case “discovered” that a larger network produces lower validation loss while ignoring the obvious confound of additional training time. Karpathy had to intervene manually.

The practical takeaway for anyone building agentic systems is that the bottleneck is not execution: it is judgment in experiment design. Agents can implement; they struggle to think rigorously about whether what they are implementing is worth running. Karpathy’s framing of the prompt and process architecture as “org code” is worth borrowing: if you are building multi-agent workflows, you are effectively writing the operating procedures of a small organization, and those procedures determine output quality more than model choice does.
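One way to encode that kind of judgment into the “org code” is a guard that refuses to credit a change when training time also differs. The sketch below is hypothetical: the `Run` fields, the `compare_runs` helper, and the 5 percent tolerance are our own illustrative choices, not anything from Karpathy’s setup, and the numbers in the usage note are invented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Run:
    """Summary of one training run (hypothetical schema)."""
    label: str
    params_millions: float
    train_seconds: float
    val_loss: float


def compare_runs(baseline: Run, candidate: Run, tolerance: float = 0.05) -> str:
    """Compare validation loss only when training time is roughly matched."""
    ratio = candidate.train_seconds / baseline.train_seconds
    if abs(ratio - 1.0) > tolerance:
        # Training time differs too much to attribute the loss gap to model size.
        return "confounded: training time differs by more than tolerance"
    if candidate.val_loss < baseline.val_loss:
        return "candidate better at matched training time"
    return "no improvement at matched training time"


# Made-up numbers for illustration only.
small = Run("small", params_millions=100, train_seconds=3600, val_loss=3.20)
large = Run("large", params_millions=200, train_seconds=7200, val_loss=3.05)
print(compare_runs(small, large))
```

With these invented values the larger run trained twice as long, so the helper reports the comparison as confounded instead of declaring the larger network a win.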

Cursor’s Tab-to-Agent Ratio as a Signal of Where the Industry Is

Karpathy shared data showing how Cursor’s user base has shifted its ratio of tab-completion requests to agent requests over time, framing it as evidence that optimal AI coding setups are a moving target, and that the community average naturally tracks toward whatever configuration is currently most effective. The observation that being too conservative leaves leverage on the table while being too aggressive creates net chaos is a useful calibration for teams that are either stuck on copilot-style tools or have rushed into full agent delegation before their workflows support it.

The 80/20 heuristic he proposes (80 percent proven workflow, 20 percent exploration of the next level) is practical advice that most development teams are not applying with any intentionality. The teams that will move fastest are not necessarily the ones with the best tools; they are the ones that are disciplined about how they experiment with new configurations before committing to them.

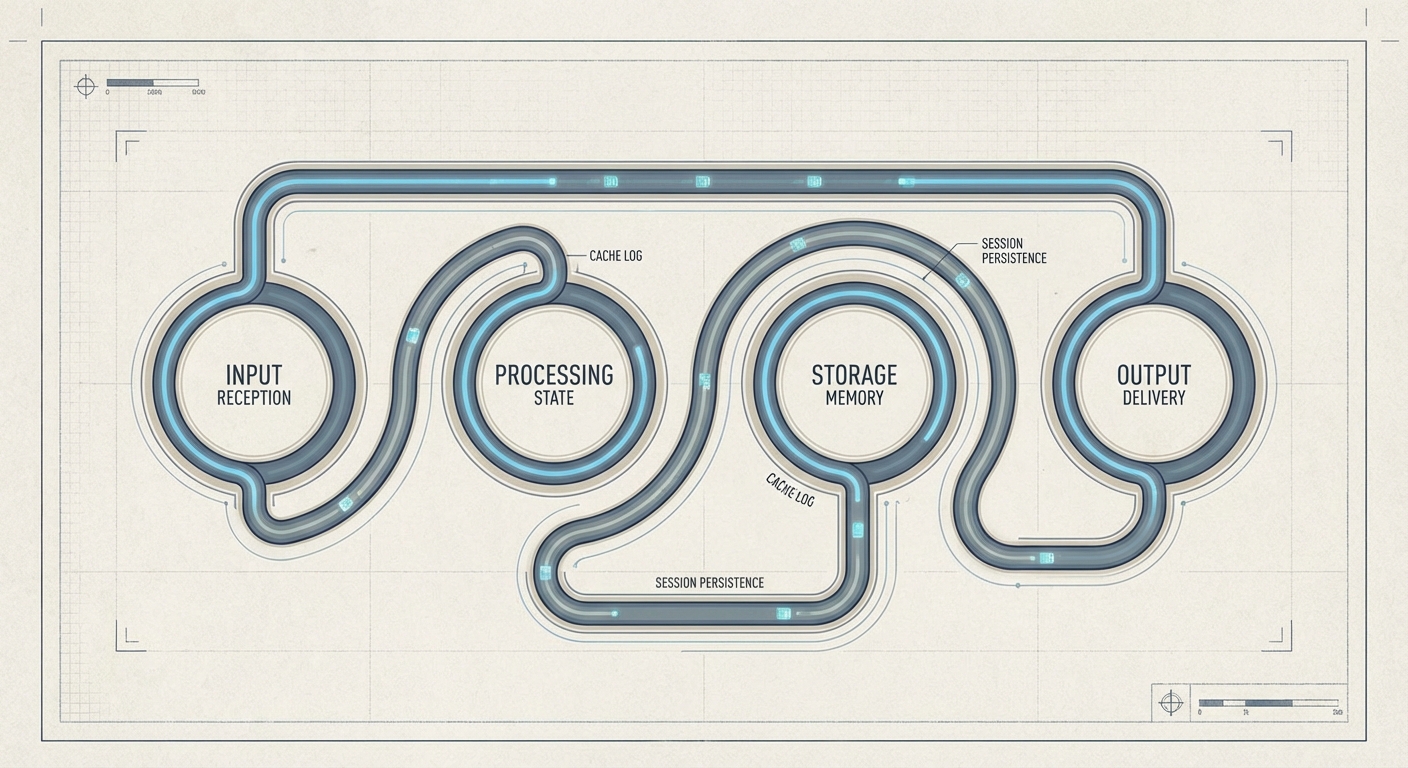

AWS Bedrock’s Stateful Agent Runtime: What Practitioners Are Actually Getting

Amazon’s introduction of a Stateful Runtime Environment in Bedrock is generating real interest among developers building multi-step agentic workflows, because the memory persistence problem has been one of the most friction-heavy aspects of putting agents into production. Previously, maintaining context across long-running agent tasks required significant custom engineering: session management, external state stores, careful prompt construction to rehydrate context. The new runtime handles this at the infrastructure level.

For teams already building on Bedrock, this reduces the custom scaffolding required for reliable agents and lowers the failure rate on long-running tasks. For teams evaluating which cloud platform to build agentic workflows on, it shifts the comparison meaningfully. The feature is not a solved problem, since stateful systems introduce their own failure modes around state corruption and stale context, but the direction of travel is correct, and practitioners who have been waiting for this capability should evaluate it against their specific workflow requirements now rather than waiting for a more mature release.

Voices

@karpathy on multi-agent research organizations: “The goal is that you are now programming an organization and its individual agents, so the ‘source code’ is the collection of prompts, skills, tools, and processes that make it up. A daily standup in the morning is now part of the ‘org code.’” The reframe from “prompt engineering” to “organizational programming” is more than semantic: it suggests that the discipline required to make agent systems work reliably is closer to management than to software development, which has real implications for who in an organization should own these systems.

@karpathy on the evolution of AI coding setups: “If you’re too conservative, you’re leaving leverage on the table. If you’re too aggressive, you’re net creating more chaos than doing useful work.” The Cursor tab-to-agent ratio chart he references suggests the optimal configuration is shifting toward agents faster than most enterprise development teams are moving, a gap that tends to widen before it closes.

The open letter signed by employees from Google and OpenAI supporting Anthropic’s resistance to unrestricted military AI deployment does not have a single quotable author, but its existence is notable: workers at direct competitors publicly backed a rival company’s ethical position against their own industry’s largest potential customer. Whether this represents durable cross-company solidarity or a moment of unusual clarity under pressure will become apparent as the Pentagon dispute develops. Follow coverage at TechCrunch for updates on whether any signatories face employer consequences.

Business Intelligence

Small Business

The Anthropic-Pentagon standoff is not abstract for small businesses that have started building workflows around Claude or any other single AI vendor. What today’s news demonstrates is that AI vendors can be removed from the market, or at minimum from specific sectors, through government action with little warning and no recourse for the businesses that depend on them. If your company has automated any meaningful process around a single model provider, you now have a concentration risk you may not have priced. The practical response is not to abandon AI tools but to ensure that critical workflows are not so deeply coupled to one vendor that switching requires rebuilding from scratch.
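A minimal sketch of what loose coupling can look like in practice: workflow code calls a small provider-agnostic wrapper, and each vendor sits behind an adapter with the same shape. Everything here is hypothetical, including the `Provider` type, the `complete_with_fallback` helper, and the two stand-in adapters, which simulate vendors and do not call any real API.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass
class Provider:
    """A vendor wrapped as a plain prompt-to-text function (hypothetical)."""
    name: str
    complete: Callable[[str], str]


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider errors out."""


def complete_with_fallback(prompt: str, providers: Sequence[Provider]) -> tuple[str, str]:
    """Try providers in order; return (provider_name, text) from the first success."""
    errors = []
    for provider in providers:
        try:
            return provider.name, provider.complete(prompt)
        except Exception as exc:  # broad on purpose: any vendor failure triggers fallback
            errors.append(f"{provider.name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Stand-in adapters; a real one would wrap a vendor SDK call behind the same signature.
def unavailable_vendor(prompt: str) -> str:
    raise ConnectionError("vendor unavailable")


def backup_vendor(prompt: str) -> str:
    return f"[backup] {prompt}"


providers = [Provider("primary", unavailable_vendor), Provider("backup", backup_vendor)]
print(complete_with_fallback("Summarize this invoice.", providers))
```

The point of the design is that swapping or dropping a vendor means changing one adapter and one list entry, not every workflow that calls a model. Prompts and output handling may still need per-vendor tuning, which this sketch does not address.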

Suno’s $300 million ARR is a useful reference point for small businesses in creative sectors. The market for AI-generated audio, imagery, and text has moved past the experimental phase into paid, recurring adoption at scale. If you are in marketing, media, or any field that relies on content production, your competitors are already using these tools at production volume. The cost and time advantages are real enough that ignoring them for another quarter is a genuine competitive risk, not a philosophical choice about AI adoption.

Block’s 40 percent workforce reduction is likely to accelerate a conversation you will have with your own team or your own accountant: where is AI actually replacing human labor versus augmenting it, and what does that mean for hiring decisions in the next 12 months? The honest answer for most 10-person businesses is that AI is augmenting, not replacing. But the economics are shifting, and understanding where each of your roles sits on that spectrum is a planning exercise worth doing now rather than when pressure forces it.

Mid-Market

The Anthropic designation and OpenAI’s $110 billion raise are happening simultaneously, and together they clarify the vendor landscape in a way that matters for procurement decisions. OpenAI now has Amazon, Nvidia, and SoftBank aligned around its continued dominance; Anthropic is under active federal pressure. That does not mean Anthropic is in existential trouble (it has substantial commercial revenue and a strong enterprise customer base), but any mid-market company currently evaluating which foundation model provider to standardize on should weight vendor stability more heavily than it might have a month ago. The government’s willingness to use procurement leverage as a policy tool is now demonstrated fact, not theoretical risk.

AWS Bedrock’s stateful agent runtime is directly relevant for operations and engineering teams at scaling companies. If you are building or planning to build agents that handle multi-step workflows (customer onboarding, document processing, support escalation routing), the persistent memory capability removes a significant engineering burden that has been slowing production deployments. Evaluate this alongside whatever custom scaffolding your team has built or is planning to build; the build-versus-buy calculus on state management just shifted.

The Block layoff is going to arrive in your board meeting as a question, if it has not already: are we using AI aggressively enough to achieve similar efficiency gains? The right answer is not to announce a workforce reduction to satisfy the question, but to have a credible, specific account of where AI is reducing your cost structure and where it is not yet ready to do so. Boards that are asking this question without a framework for evaluating it are likely to push for cuts that are premature; boards that receive a rigorous answer are more likely to approve the investment required to actually build the capability.

Enterprise

The Pentagon’s use of supply-chain risk designation as a mechanism to enforce AI vendor compliance is a governance event, not just a news story. Your legal and procurement teams should be reviewing every AI vendor contract to understand what “acceptable use” provisions exist, who controls their modification, and what the government’s ability to pressure your vendors means for your own compliance posture. If a vendor you depend on is designated a supply-chain risk, the downstream effects on your federal contracts, FedRAMP authorizations, or defense-adjacent business could be significant and fast-moving. This is not a theoretical scenario any longer.

OpenAI’s funding round, and specifically Amazon’s $50 billion commitment, has structural implications for enterprises that are navigating multi-cloud strategies. AWS now has a financial incentive to preference OpenAI’s models in its stack, which creates subtle pressure toward a particular vendor alignment when your teams are building on Bedrock. That is not necessarily bad, but it should be a conscious choice made with visibility into the financial relationships involved, not a default that accumulates through individual team decisions. Your cloud architecture governance should account for the fact that infrastructure and model vendor relationships are increasingly intertwined.

Karpathy’s multi-agent research organization experiment, while not an enterprise product announcement, is a signal about where the capability frontier is heading. The failure mode he identified, agents that implement well but reason poorly about experiment design, maps directly onto enterprise agentic deployments where the risk is not that the agent fails to complete a task but that it completes the wrong task rigorously. Your AI governance framework should be developing evaluation criteria for agent judgment quality, not just task completion rate. The board questions about AI safety and reliability that arrive next quarter will require more specific answers than most enterprises currently have documented.

In Brief

- ChatGPT Reaches 900 Million Weekly Active Users: OpenAI announced the milestone alongside its $110 billion raise, though the company has not disclosed what percentage of those users are on paid plans.

- Perplexity Launches Perplexity Computer: The product integrates multiple AI models into a single interface, positioning Perplexity as a multi-model platform rather than a search-adjacent tool.

- OpenAI and Microsoft Reaffirm Partnership: A joint statement released alongside OpenAI’s funding round signals that the Amazon relationship has not restructured the Microsoft arrangement, at least not publicly.

- ttps://www.thever