The Pentagon Has Formally Labeled Anthropic a Supply Chain Risk

The Department of Defense has made official what had been threatened: Anthropic is now designated a supply chain risk, the first American AI company to receive that classification. The designation is particularly striking because the DOD has continued deploying Anthropic’s systems in active operations, including work tied to Iran, even as it stamps the company with a label typically reserved for foreign adversaries and unreliable vendors.

Separately, CEO Dario Amodei appears to still be pursuing a revised deal, and the two sides have returned to the table after talks collapsed over the extent of military access to Anthropic’s systems. The fact that the designation did not end negotiations suggests it was applied as leverage rather than a final judgment, which makes the eventual terms of any agreement worth watching closely. We covered the initial contract collapse and Amodei’s public dispute with OpenAI in yesterday’s issue.

OpenAI Releases GPT-5.4 with Native Computer Use and a 1M-Token Context Window

OpenAI’s GPT-5.4 arrives in Pro and Thinking variants, carrying a one-million-token context window, native computer use, and tighter integration with external tools. The computer use capability is the headline feature: the model can operate applications directly, moving AI from a conversational assistant into something closer to an autonomous operator within a standard desktop environment.

Early practitioner assessments, including one from developer Peter Steinberger, suggest the coding gains are incremental, comparable to the step from GPT-5.0 to 5.1, but the unified model performs meaningfully better on documentation, reasoning, and agentic workflows. The 1M-token window combined with computer use is where the practical implications compound: workflows that previously required human handoffs between applications can now run end-to-end. Watch for rapid integration into coding assistants and enterprise automation tooling.

Luma Launches Creative AI Agents Across Text, Image, Video, and Audio

Luma released Luma Agents, a system built on new Unified Intelligence models that coordinates text, image, video, and audio generation to produce complete creative works from a single workflow. This is a meaningful structural shift: rather than requiring a producer to chain together separate tools for each modality, the agent handles orchestration internally.

The practical test will be output coherence across modalities at scale, which has historically been the failure point for multimodal pipelines. If Luma can deliver consistent quality across a full production workflow, it compresses the cost and skill threshold for content production significantly. Creative agencies and marketing teams running lean operations should pay attention to what the early demos show.

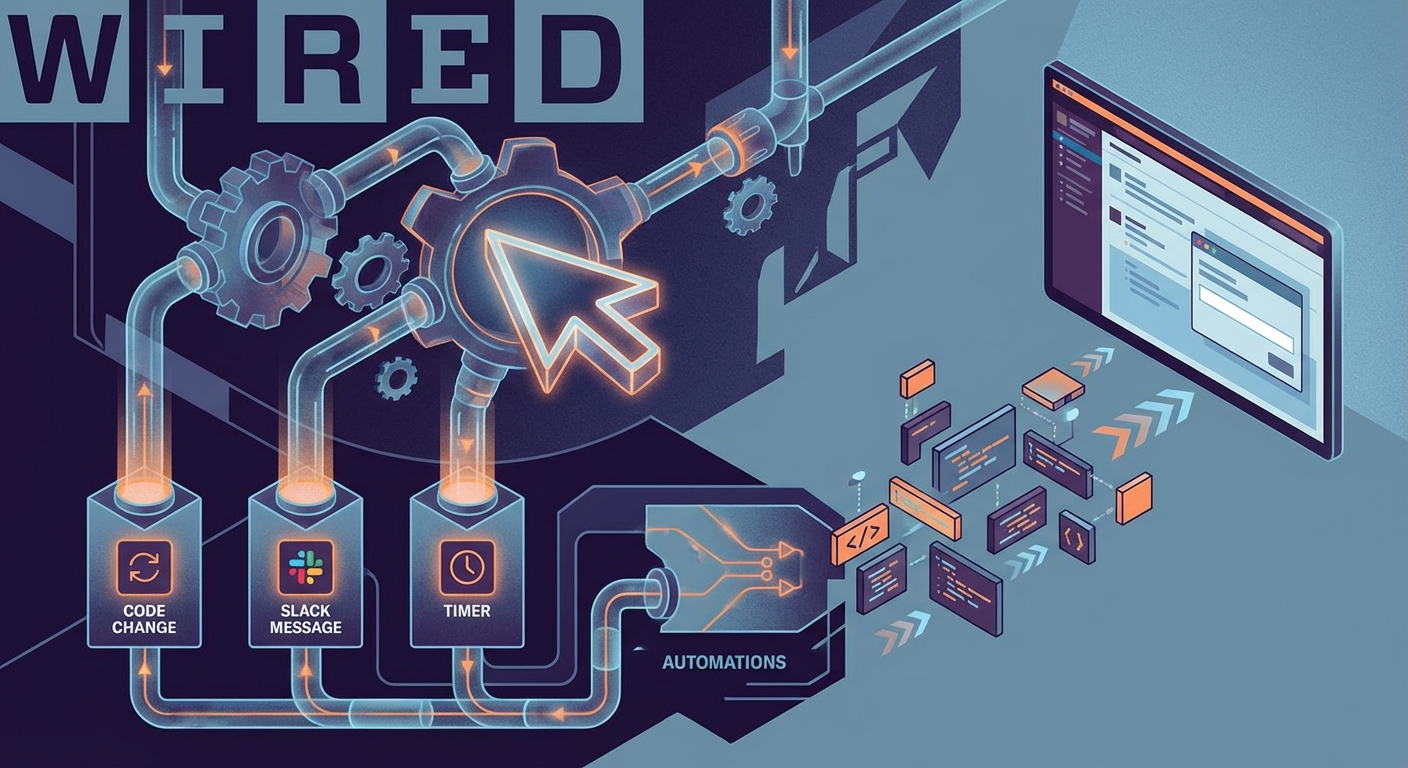

Cursor Launches Automations: Event-Driven AI Agents for Code

Cursor’s new Automations feature moves the product beyond manual prompting by triggering AI agents automatically in response to code changes, Slack messages, or scheduled timers. A developer can now configure an agent to review a pull request, flag regressions, or generate documentation without any human initiation beyond the original setup.

This is the practical shape of agentic coding becoming infrastructure rather than a novelty. The immediate implication for engineering teams is that certain categories of repetitive, rules-based work around code review and documentation can be fully delegated. The longer-term question is how teams will govern and audit decisions made by agents they are no longer actively supervising.

Meta Faces Lawsuit Over AI Glasses Footage Reviewed by Human Contractors

A lawsuit filed against Meta alleges systematic privacy violations in its AI smart glasses program. It follows an investigation by Swedish outlets that found subcontractors in Kenya were reviewing user footage that included bathroom visits and intimate moments. According to that reporting, users had no indication their recordings were being seen by contractors outside the United States, even though the company’s marketing positioned the product around user control.

The gap between what wearable AI products promise and what their data pipelines actually do is not unique to Meta, but this case makes the exposure concrete. The legal risk is significant, and the reputational damage in European markets, where data protection enforcement is aggressive, could be substantial. Any company building AI products that capture ambient video or audio should treat this case as a stress test for its own disclosure and contractor oversight practices.

Analysis

Jensen Huang Says Nvidia Is Pulling Back from OpenAI and Anthropic. The Reasoning Is Incomplete. (4 min read)

Nvidia CEO Jensen Huang confirmed at Morgan Stanley’s TMT Conference that the company is unlikely to make additional investments in OpenAI or Anthropic beyond its current stakes. His stated rationale centers on market saturation and competitive dynamics, but the explanation leaves significant gaps. Nvidia sells chips to nearly every AI lab simultaneously, which makes deep equity alignment with any single frontier model company a liability rather than an asset as competition intensifies.

The less obvious reading is that regulatory scrutiny of vertical integration in the AI stack is increasing, and Nvidia holding meaningful equity in its largest customers creates antitrust surface area. There may also be liability considerations: as AI companies take on defense contracts and operate in higher-stakes domains, equity relationships with the hardware provider become more complicated. The statement is worth watching not for what Huang said, but for what structural shift it may be signaling about how the infrastructure layer positions itself relative to the model layer going forward.

An Open-Source Foundation Model for the Genome, Trained on Trillions of DNA Bases (4 min read)

Researchers have released a large genome model trained on trillions of DNA base pairs that matches or outperforms specialized tools on tasks including gene identification, regulatory sequence detection, and splice site prediction. The open-source release means any research institution with standard computational resources can now access genome interpretation capabilities that previously required either proprietary tools or significant in-house model development.

The implications extend beyond academic research. Drug discovery workflows that depend on genomic analysis become faster and cheaper when a general-purpose foundation model handles the heavy lifting that once required custom pipelines. This is the same pattern that played out in language and image understanding: a capable open foundation model lowers the barrier for applied work dramatically. Biotech companies and research hospitals that have been waiting for reliable open genomics tooling should evaluate this release against their current stack.

Netflix Acquires InterPositive, Targeting AI-Assisted Post-Production (2 min read)

Netflix purchased InterPositive, the AI filmmaking company co-founded by Ben Affleck, acquiring 16 engineers focused on tools that analyze and manipulate existing production footage rather than generating synthetic performances. The distinction matters: this is not an investment in AI actors or deepfake-adjacent technology, but in workflow tooling that reduces editing time and cost while keeping human creative decisions intact.

The acquisition fits a pattern of studios moving past the generative AI conversation toward practical production efficiency. Netflix’s content volume makes even modest reductions in post-production time and cost meaningful at scale. The more significant signal for the broader industry is that the talent and tooling for AI-assisted editing are now being acquired rather than built in-house, which suggests the market for production-focused AI companies is entering a consolidation phase.

From the Field

Codex 5.3 at Maximum Reasoning Solved a Six-Month Bug. Lower Reasoning Tiers Would Not.

Developer Mitchell Hashimoto shared a detailed account of using Codex 5.3 at the extended reasoning tier to resolve a GTK4 bug that had defeated both his own efforts and several other frontier models, including Claude Opus 4.6. The $4.14, 45-minute run succeeded specifically because the model read through GTK4 source code directly, something lower reasoning settings and competing models declined to do. The fix was contained to a single file and required only minor cleanup from a human reviewer.

The practical takeaway for engineering teams is that reasoning tier is not a quality knob to turn up by default: it is a deliberate tool for problems where broad context traversal is the bottleneck. Using extended reasoning on every task would be economically irrational. But for high-value, long-standing bugs where the answer is buried in upstream source code, the calculus changes. Teams should identify that category of problem in their backlog and test accordingly.
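
The calculus can be made concrete with a back-of-the-envelope expected-value check. The sketch below is illustrative only: the $4.14 run cost comes from Hashimoto’s account, while the success probabilities, hours saved, and hourly rate are assumptions chosen for the example, and `worth_extended_run` is a hypothetical helper rather than part of any tool.

```python
def worth_extended_run(run_cost_usd, success_probability,
                       engineer_hours_saved, hourly_rate_usd):
    """Return True when the expected value of engineer time saved exceeds the run cost."""
    expected_saving = success_probability * engineer_hours_saved * hourly_rate_usd
    return expected_saving > run_cost_usd


RUN_COST = 4.14       # reported cost of the 45-minute run
HOURLY_RATE = 100.0   # assumed loaded engineer cost, USD per hour

# Long-standing bug: assume a 20% chance of success and 8 engineer-hours saved.
long_standing_bug = worth_extended_run(RUN_COST, 0.2, 8, HOURLY_RATE)

# Routine tweak: assume a 90% chance of success but only about a minute saved.
routine_tweak = worth_extended_run(RUN_COST, 0.9, 0.02, HOURLY_RATE)

print(long_standing_bug, routine_tweak)
```

Under these assumed numbers the long-standing bug clears the bar easily and the routine tweak does not, which is the distinction the backlog triage should capture.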

Jensen Huang on OpenClaw: More Downloads Than Linux Achieved in Thirty Years, in Three Weeks

At the Morgan Stanley TMT Conference, Jensen Huang described OpenClaw as probably the single most important software release ever, citing adoption that surpassed Linux’s cumulative download record in three weeks. The claim, relayed by Huang at a major institutional investor event, signals how seriously the infrastructure layer is positioning AI tooling as a platform-level shift rather than an application-layer story.

Whether or not the download figures hold up to scrutiny, the fact that Nvidia’s CEO is making this comparison in front of institutional investors tells you something about how the AI infrastructure narrative is being constructed for capital markets. Practitioners building on top of OpenClaw should monitor how quickly its ecosystem hardens: rapid adoption curves that outpace documentation and community maturity create real integration risk.

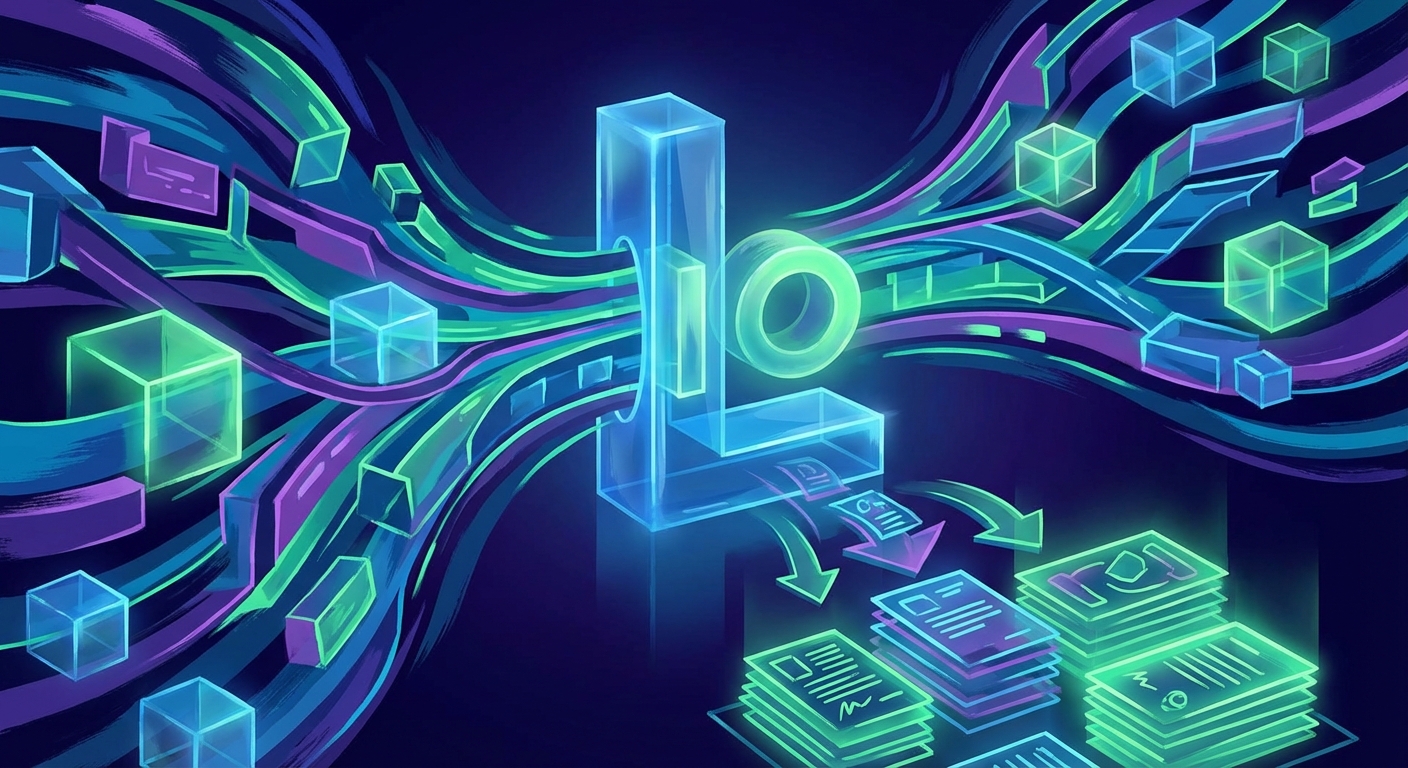

Lio Raises $30M from a16z to Automate Enterprise Procurement

AI procurement startup Lio closed a $30 million Series A led by Andreessen Horowitz to automate purchasing workflows inside large enterprises. The funding validates what procurement teams have known for years: the process is heavily manual, error-prone, and produces enormous amounts of structured data that AI can work with directly. Lio’s traction suggests the category is real and not just a venture narrative.

For practitioners building or evaluating enterprise automation tools, procurement is worth watching because it is a high-frequency, high-stakes workflow with clear audit requirements, which makes it a genuine test of whether AI agents can operate reliably in regulated business processes. If Lio demonstrates that AI can handle procurement end-to-end with acceptable error rates and compliance coverage, the template will spread quickly to adjacent back-office functions.

Voices

@steipete wrote of GPT-5.4: “It’s a good model. The coding-specific jump is more in line with what we had in 5.0 to 5.1, but it’s now unified and smarter on everything else, writes better docs, is a better general-purpose agent, and is overall more pleasant to use.” This is a useful corrective to the launch framing. Practitioners expecting a step-change in raw coding performance will be underwhelmed; those whose workflows depend on reliable documentation generation and general-purpose reasoning will find more to work with.

@TheRundownAI relayed Jensen Huang’s statement that OpenClaw “is probably the single most important release of software, probably ever,” and that it surpassed Linux’s download record in three weeks. The comparison to Linux is a deliberate signal to institutional audiences about platform-level significance, and coming from Nvidia’s CEO at a Morgan Stanley conference rather than a product launch event, it carries a different weight than typical tech hyperbole.

@mitchellh described the Codex 5.3 bug resolution as an “it’s so over” moment, while also noting it “feels amazing because now our next stable release will have this fix.” The dual reaction captures something real about where developer tooling is: the capability is genuinely disorienting, but the practical outcome is simply that a release ships cleaner. That combination of existential unease and immediate productivity gain is increasingly the daily reality for engineers at the frontier.

Business Intelligence

Small Business

The pattern Luma Agents represents matters more to small creative businesses than the product itself. If a ten-person agency or production company can generate coordinated text, image, video, and audio outputs from a single workflow, the labor economics of content production shift in their favor relative to larger competitors who have built teams and processes around sequential, tool-by-tool production. The window to experiment is now, before larger players have restructured their own workflows to match.

The Meta smart glasses lawsuit is a warning about a category of risk that small businesses using AI hardware or wearables for customer-facing applications may not have considered. If your AI product captures video, audio, or biometric data and passes any of it to a third-party vendor for training or review, you have privacy exposure that your terms of service may not adequately disclose. That exposure is not hypothetical: it is now in active litigation. Review what data your AI tools collect, where it goes, and what your contracts with those vendors actually say.

Cursor’s Automations feature is relevant for any small business that has a developer or technical co-founder. Event-driven agents that trigger automatically on code changes or messages represent a genuine reduction in the cost of maintaining software quality. If you are running a product with a small engineering team, this category of tool reduces the overhead of code review and documentation without adding headcount. The cost is the time required to configure and govern the agents correctly at the outset.

Mid-Market

GPT-5.4’s native computer use capability deserves a practical assessment from operations leaders before it becomes a board agenda item. The ability to operate applications autonomously across a standard desktop environment means that workflows currently requiring human handoffs between systems are candidates for full automation. Identify two or three high-volume, low-judgment processes in your operations that involve moving data between applications and test them against the new model. The 1M-token context window also means document-heavy workflows in legal, finance, and procurement can now be handled in a single pass.

The Lio funding round is a signal about where enterprise automation investment is concentrating. Procurement is an early target because the data is structured and the ROI is measurable, but the same pattern will move into AP, HR operations, and contract management quickly. Mid-market companies that have not yet built a roadmap for back-office automation risk finding themselves at a cost disadvantage within two to three years as early adopters in their category compress operating costs. The time to build internal capability and vendor relationships in this space is before the category matures and pricing hardens.

The Anthropic-Pentagon situation has a direct implication for any mid-market company that has standardized on Anthropic’s API in a regulated or government-adjacent sector. A supply chain risk designation, even one under active negotiation, introduces procurement uncertainty for customers who are themselves subject to federal contracting requirements or compliance frameworks that reference vendor risk assessments. If Anthropic is on your critical path, document your contingency and monitor the outcome of the current negotiations.

Enterprise

The Pentagon’s supply chain risk designation for Anthropic is the most consequential governance development this week for enterprise CIOs. A designation of this kind from the DOD carries weight beyond defense contracting: it will be cited in procurement risk assessments, compliance audits, and vendor reviews across regulated industries. If Anthropic is embedded in your production stack, your legal and procurement teams need to assess whether that designation creates any exposure under your existing vendor risk management framework, regardless of whether you have federal contracts. The answer is probably no in most cases, but the question needs to be formally asked and documented.

GPT-5.4’s computer use capability introduces a governance category that most enterprise security frameworks have not yet addressed: AI agents with authenticated access to internal applications. If your teams begin deploying agents that can operate browsers, read spreadsheets, and move data between systems, you need session logging, permission scoping, and audit trails before those agents touch production systems. The productivity case will arrive before the governance framework does unless you build the framework proactively. Engage your security architecture team now, not after the first incident.
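
For teams that want a starting point, here is a minimal sketch of what permission scoping and an audit trail can look like around agent actions. It is not tied to any vendor’s API: `AgentSession`, `PermissionDenied`, the action names, and the targets are all hypothetical, and a production system would write the log to tamper-resistant storage rather than keep it in memory.

```python
import json
import time
from dataclasses import dataclass, field


class PermissionDenied(Exception):
    """Raised when an agent attempts an action outside its granted scope."""


@dataclass
class AgentSession:
    agent_id: str
    allowed_actions: frozenset
    audit_log: list = field(default_factory=list)

    def perform(self, action, target, handler):
        """Run handler(target) only if the action is in scope; log every attempt."""
        allowed = action in self.allowed_actions
        # Record the attempt before acting, so denied requests are also auditable.
        self.audit_log.append({
            "ts": time.time(),
            "agent": self.agent_id,
            "action": action,
            "target": target,
            "allowed": allowed,
        })
        if not allowed:
            raise PermissionDenied(f"{self.agent_id} may not {action} {target}")
        return handler(target)

    def export_log(self):
        """Serialize the audit trail as JSON Lines for review."""
        return "\n".join(json.dumps(entry) for entry in self.audit_log)


# Hypothetical agent scoped to reading spreadsheets only.
session = AgentSession("invoice-bot", frozenset({"read_spreadsheet"}))
rows = session.perform("read_spreadsheet", "q3.xlsx", lambda t: f"rows from {t}")

try:
    session.perform("send_email", "cfo@example.com", lambda t: None)
    denied = False
except PermissionDenied:
    denied = True

print(session.export_log())
```

The design choice worth copying is that the log entry is written before the permission check is enforced, so out-of-scope attempts leave a record instead of disappearing.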

The Meta smart glasses lawsuit should be reviewed by your privacy counsel and your DPO if you operate in the EU. The specific mechanism at issue, footage collected by an AI hardware product being reviewed by offshore contractors without adequate user disclosure, is a pattern that appears in a wider range of AI products than most enterprises have mapped. Any AI vendor in your stack that processes audio, video, or sensor data from users or employees should be asked directly whether human reviewers access that data, under what conditions, and in which jurisdictions. The answers should be contractually binding and auditable.

In Brief

- Seven tech giants signed Trump’s data center power pledge. Google, Meta, Microsoft, Oracle, OpenAI, Amazon, and xAI committed to preventing AI infrastructure from spiking utility bills for residential consumers near new data centers.

- Dario Amodei called OpenAI’s statements about the Pentagon contract dispute “straight up lies.” The public accusation deepens the rift between the two labs as they now compete directly for defense contracts.

- Netflix acquired InterPositive, Ben Affleck’s AI filmmaking startup, absorbing 16 engineers. The deal focuses on post-production workflow tools rather than generative performance technology.

- Lio closed a $30 million Series A led by Andreessen Horowitz to automate enterprise procurement. The round signals growing institutional conviction that AI-driven back-office automation is entering a durable commercial phase.

- An open-source large genome model trained on trillions of DNA bases was released by researchers. The foundation model matches or exceeds specialized tools on gene identification and regulatory sequence tasks, with no proprietary access required.

- Meta’s AI glasses footage was reviewed by human contractors in Kenya, including sensitive and intimate content. Users had no visible indication their recordings were being accessed by offshore workers, according to Swedish investigative reporting.

- Cursor launched Automations, enabling AI agents to trigger automatically on code changes, Slack messages, or timers. The feature moves agentic coding from an on-demand tool into persistent background infrastructure for development teams.

Tool of the Day

Luma Agents is designed for creative professionals and content teams who need to produce coordinated outputs across text, image, video, and audio without managing a separate tool for each modality. A concrete use case: a marketing team briefing a product launch campaign can input a single creative direction and receive a draft that spans written copy, visual assets, and a video clip in a single workflow, reducing the back-and-forth between specialized tools and the people who operate them. The platform is built on Luma’s Unified Intelligence models, which handle cross-modal coordination internally rather than chaining separate APIs. It is most relevant for lean creative teams producing high volumes of varied content, where the overhead of tool switching and output alignment currently consumes a significant share of production time.